NAND-SPIN-Based Processing-in-MRAM Architecture for Convolutional Neural Network Acceleration

Image credit: Unsplash

Abstract

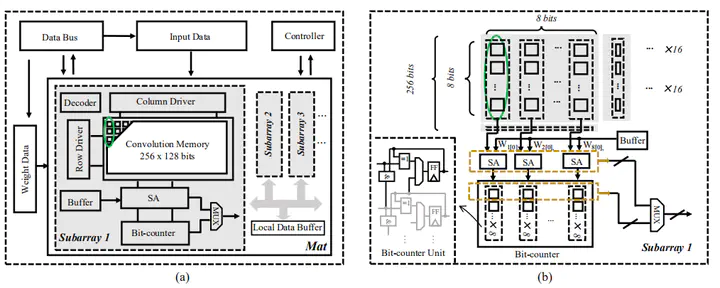

Traditional computing systems face critical performance and efficiency bottlenecks when processing large-scale datasets, owing to the “power wall” and “memory wall” problems. To address these problems, processing-in-memory (PIM) architectures have been developed that place computation logic in or near memory, alleviating the bandwidth limitations of data transfer. NAND-like spintronics memory (NAND-SPIN) is a promising type of magnetoresistive random-access memory (MRAM) that offers low write energy and high integration density, and it can be used to perform efficient in-memory computation. In this work, we propose a NAND-SPIN-based PIM architecture for efficient convolutional neural network (CNN) acceleration. A straightforward data mapping scheme is used to improve parallelism while reducing data movement. Benefiting from the characteristics of NAND-SPIN and the in-memory processing architecture, experimental results show that the proposed approach achieves ∼2.6× speedup and ∼1.4× improvement in energy efficiency over state-of-the-art PIM solutions.